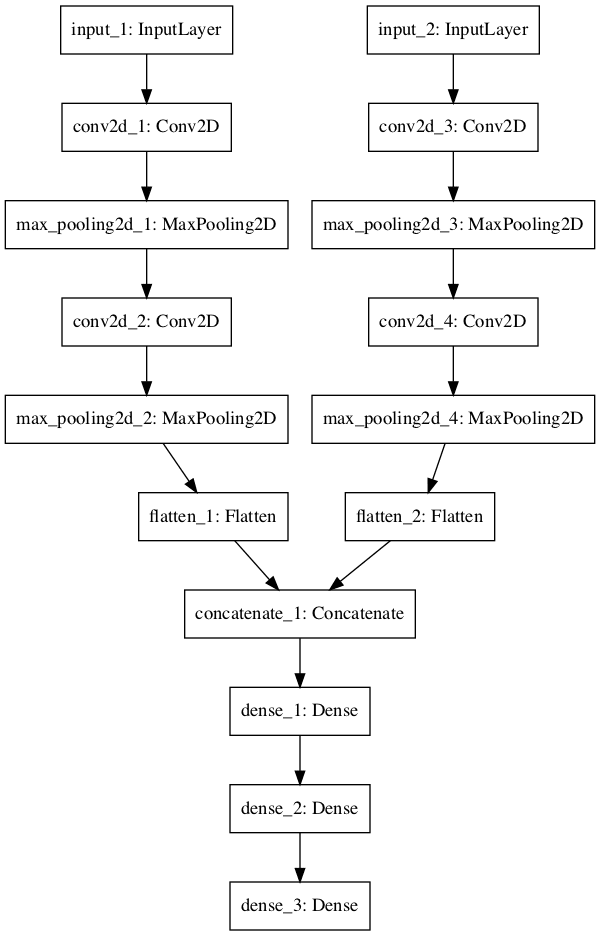

Deep multiblock predictive modelling using parallel input convolutional neural networks - ScienceDirect

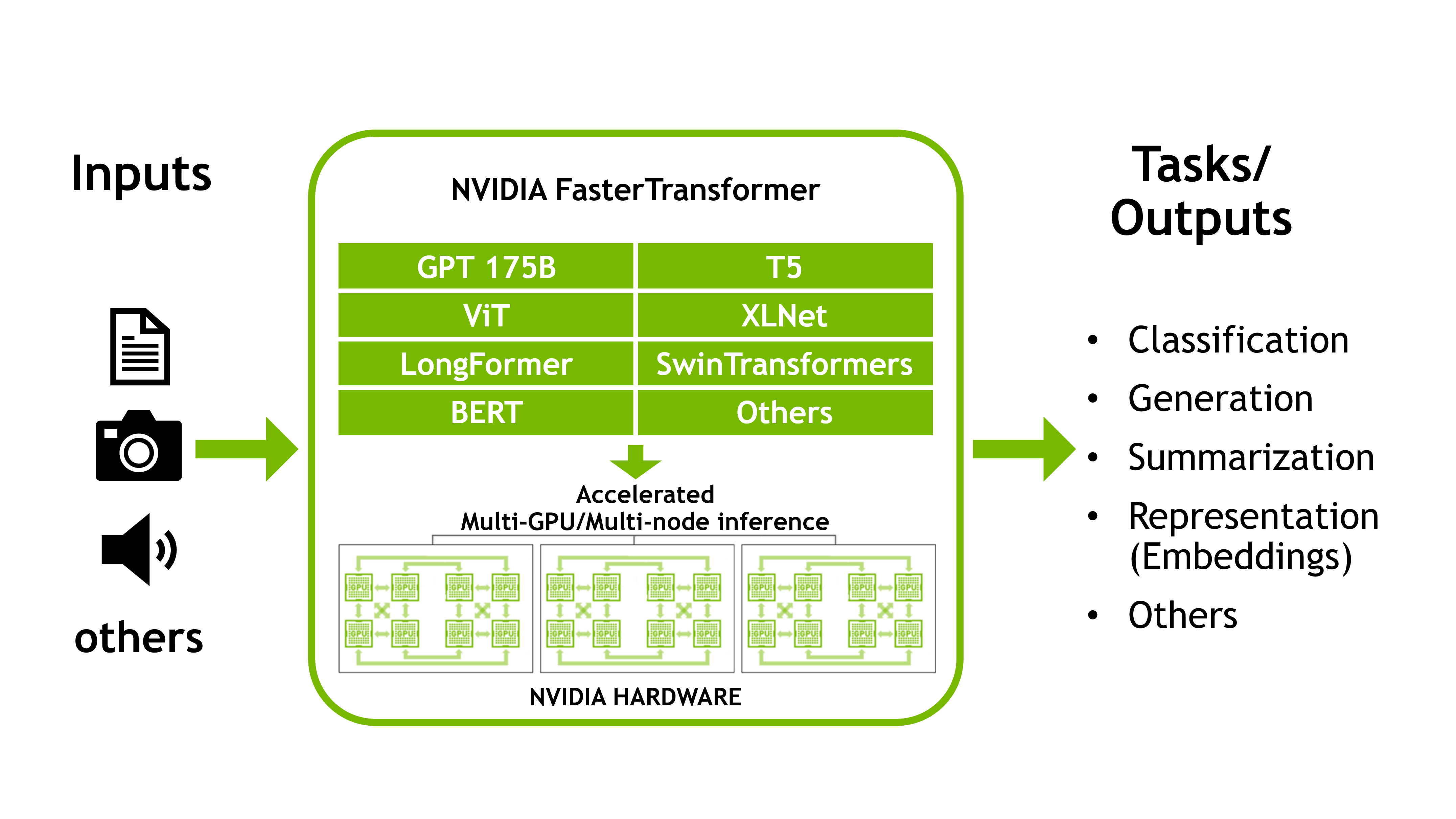

Accelerated Inference for Large Transformer Models Using NVIDIA Triton Inference Server | NVIDIA Technical Blog

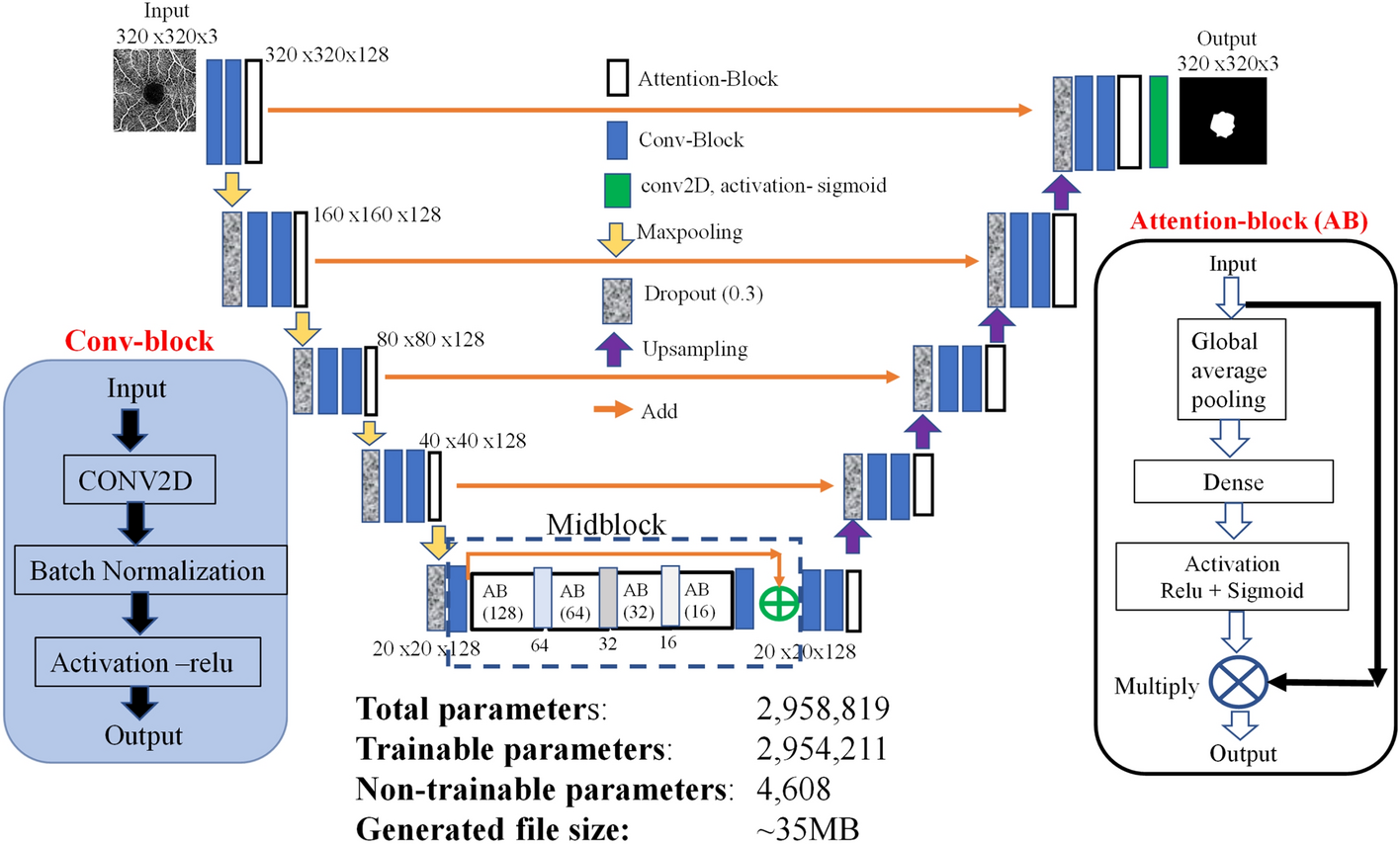

A lightweight deep learning model for automatic segmentation and analysis of ophthalmic images | Scientific Reports

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

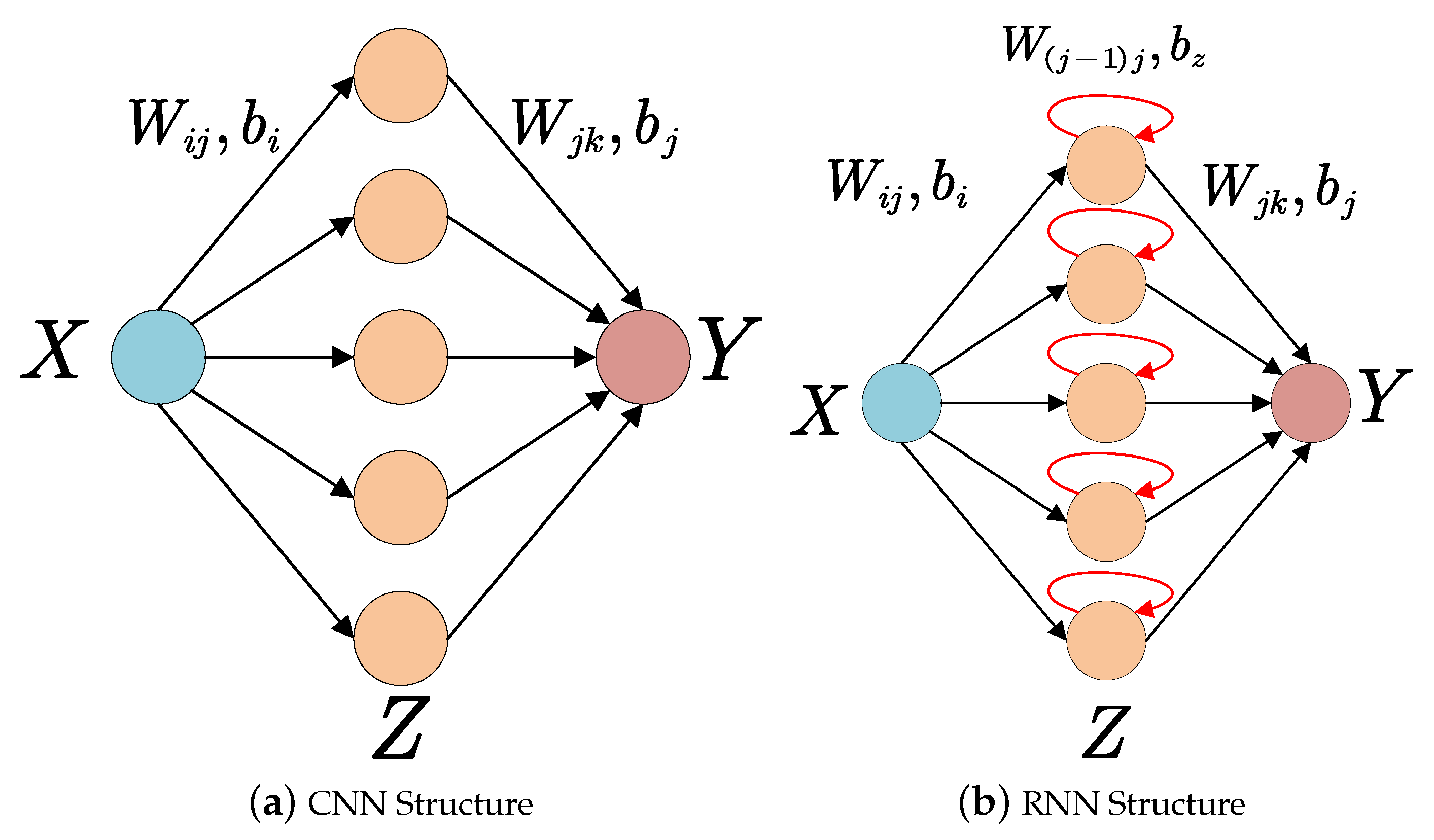

Electronics | Accelerating Neural Network Inference on FPGA-Based Platforms—A Survey

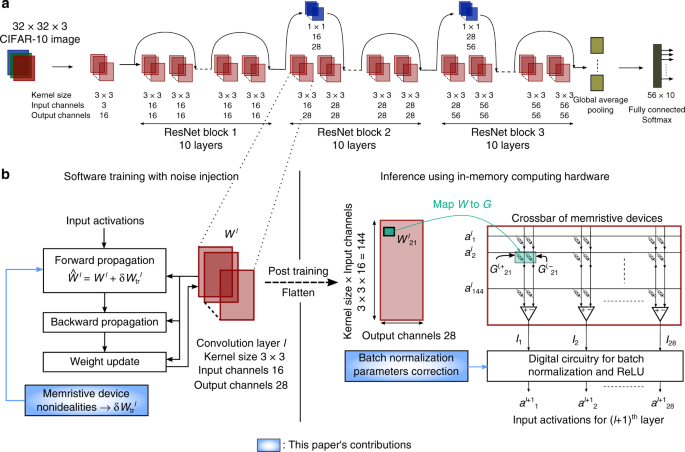

Accurate deep neural network inference using computational phase-change memory | Nature Communications

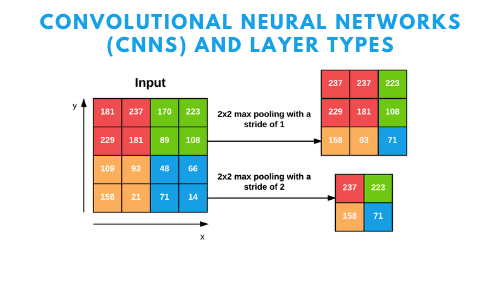

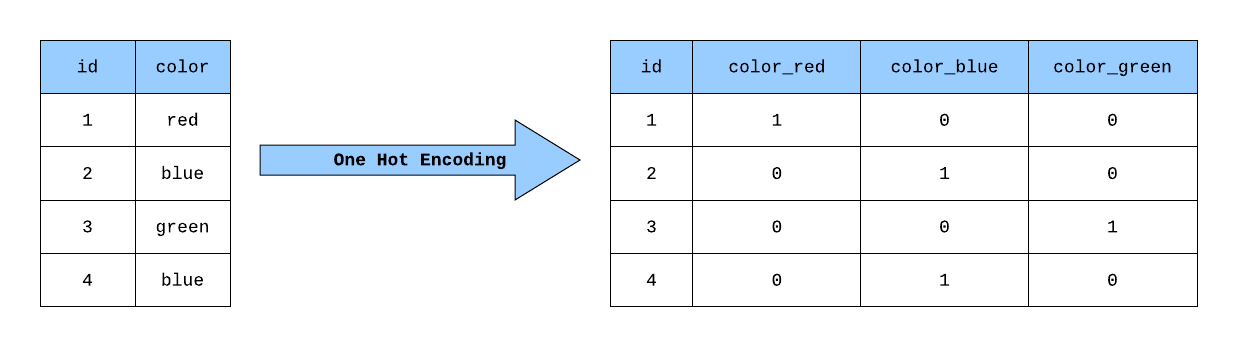

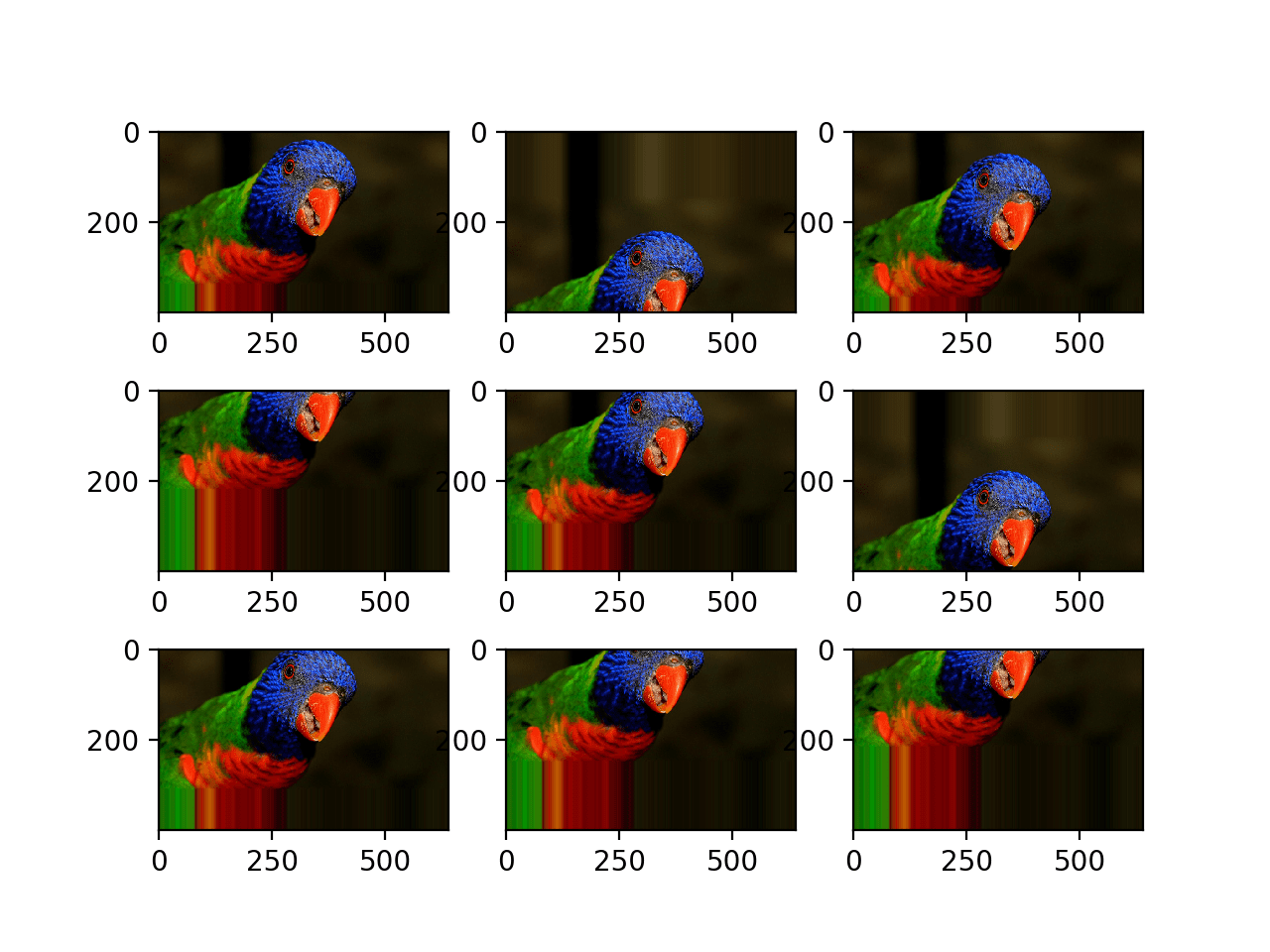

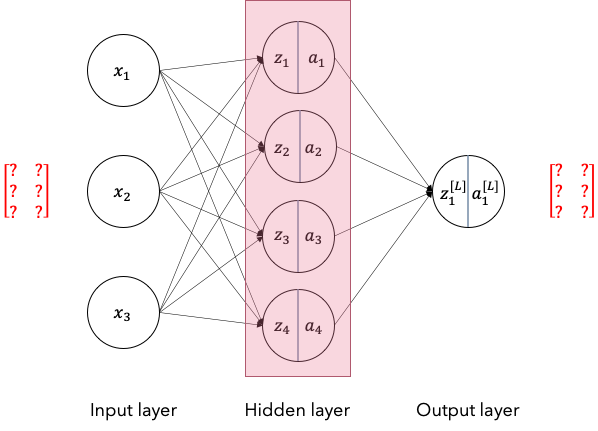

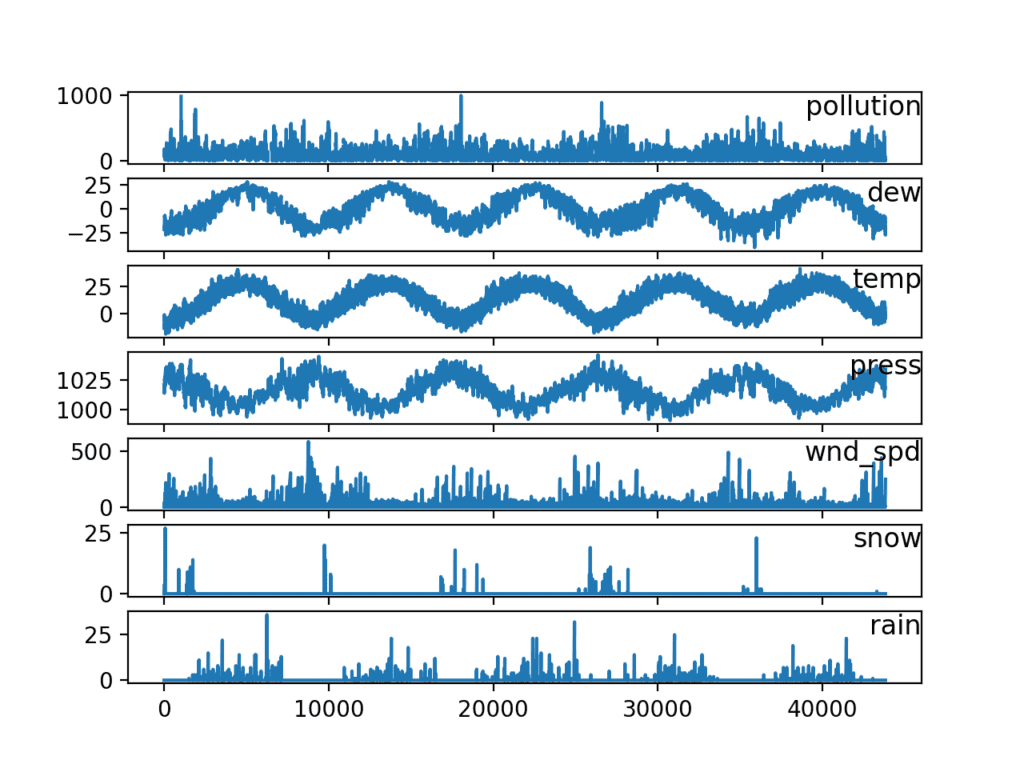

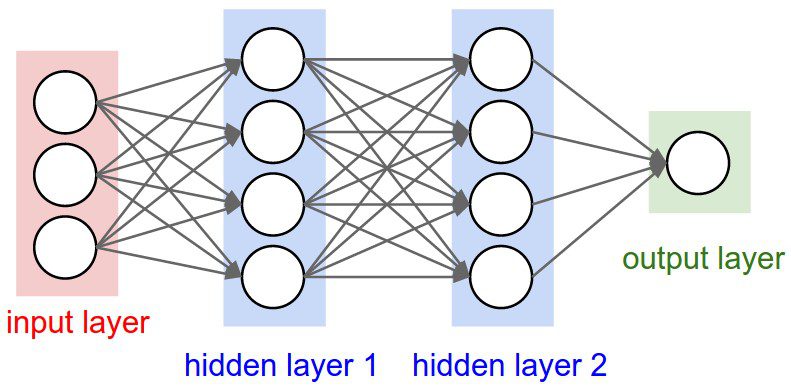

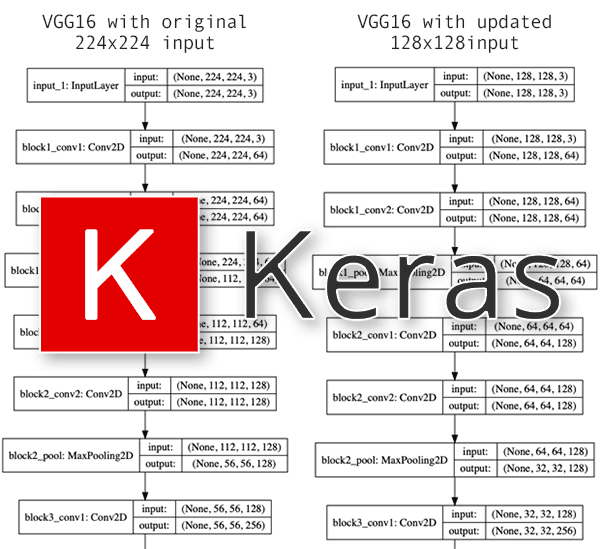

Ultimate Guide to Input shape and Model Complexity in Neural Networks | by Chetana Didugu | Towards Data Science